Understanding Copilot’s AI Limitations and Responsibilities


Copilot is a generative AI tool designed to assist with programming tasks, leveraging machine learning to provide code suggestions and recommendations. Recently, Microsoft updated its terms of service, indicating that users should approach Copilot as a tool meant for “entertainment purposes only.” This statement raises important questions regarding the reliability of AI-generated outputs. In this article, we will explore the implications of Microsoft’s warning, the responsibilities of developers using Copilot, and how to effectively integrate it into their workflows.

What Is Copilot?

Copilot is a generative AI tool from Microsoft that suggests and completes code as developers type. It uses machine learning models to analyze code patterns and generate recommendations in real time. Given Microsoft’s statement that “Copilot is for entertainment purposes only,” developers need to understand what relying on such a tool actually implies.

Why This Matters Now

The rise of generative AI tools like Copilot has transformed the software development landscape, enabling faster coding and innovation. However, as AI systems become more integrated into development workflows, concerns about the reliability and accuracy of their outputs have gained traction. The warning from Microsoft reflects a growing awareness of these risks among AI companies themselves. For developers, it is a reminder to think critically about AI outputs and validate them, especially before deploying them in production environments.

Technical Deep Dive

Understanding the technical aspects of Copilot is essential for developers looking to leverage its capabilities effectively. Here’s how Copilot operates:

- Machine Learning Models: Copilot was originally built on OpenAI’s Codex, a descendant of GPT-3 fine-tuned on source code, and has since moved to newer models. The model interprets both code and natural-language comments.

- Integration with IDEs: Copilot seamlessly integrates with popular Integrated Development Environments (IDEs) like Visual Studio Code, allowing developers to receive contextual suggestions as they code.

- Training on Diverse Codebases: The model has been trained on a vast corpus of publicly available code from platforms like GitHub, making it capable of understanding various programming languages and frameworks.

Here’s a simple example of how to use Copilot in a Python project:

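```python
def calculate_factorial(n):
    """Calculate the factorial of a non-negative integer."""
    # Guard against negative input, which would otherwise recurse forever.
    if n < 0:
        raise ValueError("n must be non-negative")
    if n == 0 or n == 1:
        return 1
    return n * calculate_factorial(n - 1)


# Copilot may suggest the following usage:
result = calculate_factorial(5)  # Should return 120
print(result)
```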

Developers should always validate Copilot’s suggestions against project requirements and best practices, because the AI can produce incorrect or suboptimal code. The IDE integration can boost productivity, but only when these limitations are kept in mind.
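
One way to validate a suggestion is to compare it with a trusted reference before accepting it. The sketch below is illustrative only: `suggested_factorial` stands in for a hypothetical AI suggestion (it is not actual Copilot output) that looks plausible but mishandles one edge case, and `find_mismatches` is a helper name invented here. The reference is Python’s standard `math.factorial`.

```python
import math


def suggested_factorial(n):
    """Hypothetical AI suggestion: valid Python, but wrong for n == 0."""
    result = n  # Bug: starting from n makes the result 0 when n == 0
    for i in range(1, n):
        result *= i
    return result


def find_mismatches(candidate, reference, inputs):
    """Return (input, got, expected) for every input where the two disagree."""
    mismatches = []
    for n in inputs:
        got, expected = candidate(n), reference(n)
        if got != expected:
            mismatches.append((n, got, expected))
    return mismatches


if __name__ == "__main__":
    # prints [(0, 0, 1)]: the suggestion returns 0 for 0!, which should be 1
    print(find_mismatches(suggested_factorial, math.factorial, range(6)))
```

The suggestion passes for every input from 1 to 5, so a quick manual check with `5` would not reveal the problem. Including edge cases such as `0` in the inputs is what exposes it.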

Real-World Applications

1. Rapid Prototyping

Developers can use Copilot for quickly generating prototypes, allowing for faster iterations and testing of ideas. This is particularly useful in startups where speed is critical.

2. Learning Tool

New developers can leverage Copilot as a learning tool, gaining insights into coding styles, best practices, and language syntax through the suggestions it provides.

3. Code Completion

In large codebases, Copilot can assist with code completion, which can cut down on typing mistakes in repetitive code, provided each suggestion is still reviewed.

4. Documentation Assistance

Copilot can help generate documentation by suggesting comments and explanations for code snippets, which enhances code maintainability.
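
Suggested documentation deserves the same scrutiny as suggested code. As a minimal sketch, the examples inside a docstring can be executed with Python’s standard `doctest` module, so a comment that drifts from the code’s real behavior is flagged. The `slugify` function and its examples are invented here for illustration and are not actual Copilot output.

```python
import doctest
import re


def slugify(title):
    """Convert a title to a lowercase, hyphen-separated slug.

    >>> slugify("Hello, World!")
    'hello-world'
    >>> slugify("  Copilot   Tips ")
    'copilot-tips'
    """
    # Keep only runs of letters and digits, then join them with hyphens.
    words = re.findall(r"[a-z0-9]+", title.lower())
    return "-".join(words)


if __name__ == "__main__":
    # Runs every docstring example in this module and reports any mismatch.
    doctest.testmod()
```

If a generated docstring claimed a different result than the function produces, `doctest.testmod()` would report the failing example instead of passing silently.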

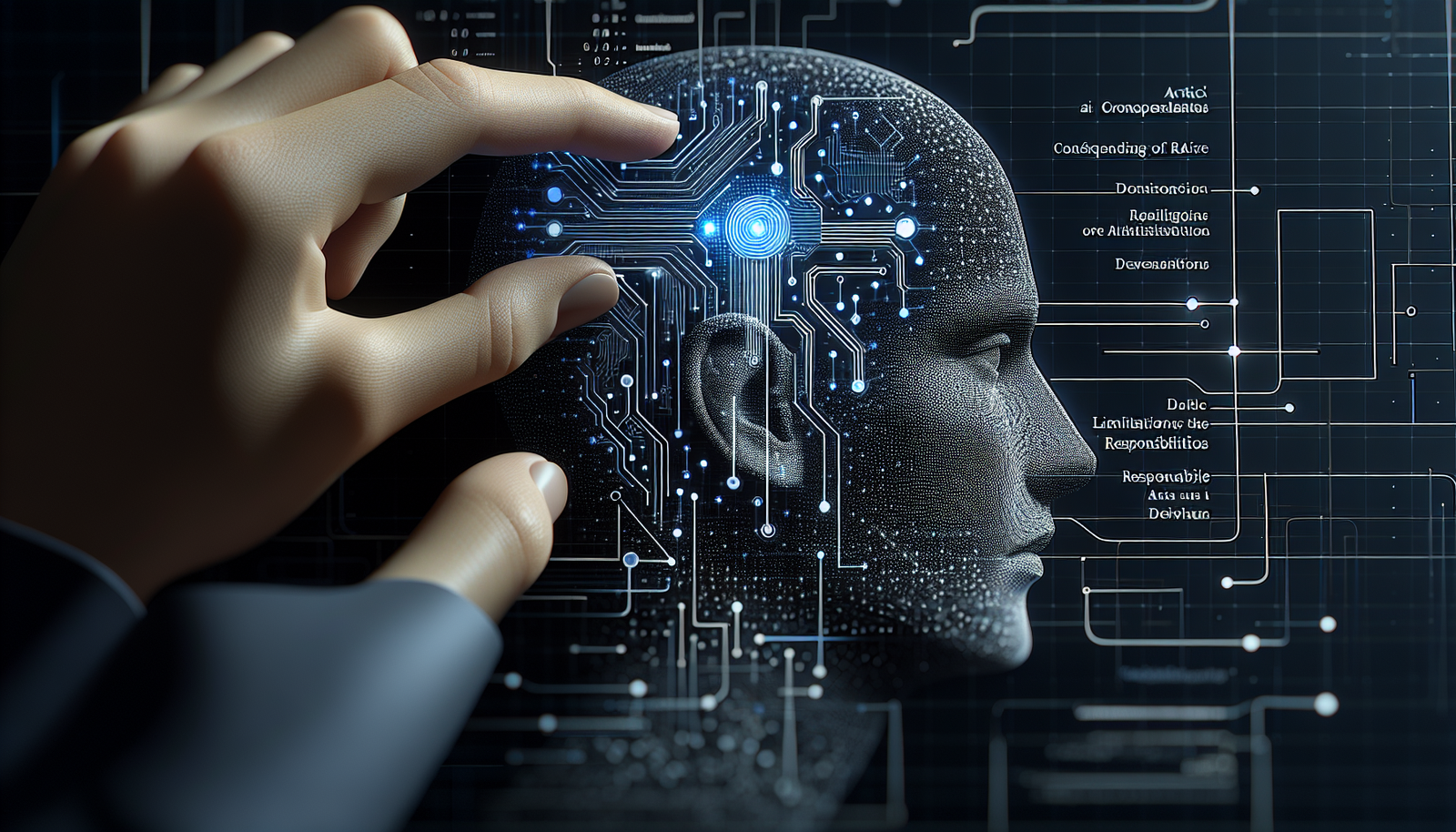

What This Means for Developers

Developers must approach Copilot with a mindset that balances innovation with caution. Here are key takeaways:

- **Critical Evaluation:** Always critically assess AI-generated code to ensure it meets project requirements and standards.

- **Supplementary Tool:** Use Copilot as a supplementary tool rather than a replacement for programming skills and knowledge.

- **Stay Updated:** Keep abreast of updates to terms of service and functionalities of AI tools to understand their evolving capabilities and limitations.

💡 Pro Insight: As AI tools like Copilot evolve, the responsibility for maintaining a high standard of code quality stays with developers. Future iterations may include more robust validation features that aid in this effort.

Future of Copilot (2025–2030)

Looking ahead, the landscape for tools like Copilot will likely continue to evolve significantly. By 2025, we may see enhanced models that incorporate user feedback more effectively, leading to personalized coding suggestions. Improved context awareness will enable these tools to provide not just code but also architectural advice based on project specifications.

Moreover, as AI technologies mature, regulatory frameworks surrounding their use will likely become more defined, requiring developers to adhere to ethical guidelines when utilizing AI in their work. This will not only safeguard against misuse but also enhance trust in AI-generated outputs.

Challenges & Limitations

1. Misleading Outputs

Copilot can generate code that is syntactically correct but functionally incorrect, leading to potential bugs in applications.

2. Intellectual Property Concerns

Given that Copilot is trained on existing code, there are ongoing debates about the ownership of AI-generated code and potential copyright issues.

3. Dependency Risks

Over-reliance on AI tools can hinder a developer’s ability to solve problems independently, creating a skills gap over time.

4. Security Vulnerabilities

AI-generated code may inadvertently introduce security vulnerabilities, necessitating thorough reviews and testing before deployment.
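
The kind of issue a review should catch can be sketched with Python’s standard `sqlite3` module. The table, data, and function names below are invented for illustration and are not actual Copilot output. The first lookup builds its SQL by string formatting, which allows injection; the second passes the value as a bound parameter.

```python
import sqlite3


def make_demo_db():
    """Create an in-memory database with two example users."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT, role TEXT)")
    conn.executemany(
        "INSERT INTO users VALUES (?, ?)",
        [("alice", "admin"), ("bob", "user")],
    )
    return conn


def find_user_unsafe(conn, name):
    # Vulnerable: user input is spliced directly into the SQL text.
    query = f"SELECT name, role FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn, name):
    # Safer: the value is bound as a parameter, so it is never parsed as SQL.
    return conn.execute(
        "SELECT name, role FROM users WHERE name = ?", (name,)
    ).fetchall()


if __name__ == "__main__":
    conn = make_demo_db()
    payload = "x' OR '1'='1"
    print(len(find_user_unsafe(conn, payload)))  # 2: every row is returned
    print(len(find_user_safe(conn, payload)))    # 0: no user has that name
```

Both functions return the same rows for ordinary names, so the difference only shows up when a review or test deliberately tries hostile input.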

Key Takeaways

- Copilot is designed for productivity, but users must validate its outputs critically.

- Microsoft’s terms of service underscore the need for cautious use of AI tools.

- Developers should view Copilot as a supplementary tool rather than a sole source of truth.

- Ongoing advancements in AI are likely to enhance the capabilities and reliability of tools like Copilot.

- Understanding the limitations of AI-generated code is essential for maintaining code quality.

Frequently Asked Questions

What is Microsoft Copilot used for?

Microsoft Copilot is an AI-powered tool that assists developers by providing code suggestions, improving coding efficiency, and enhancing productivity.

Is Copilot reliable for production code?

While Copilot can generate useful code snippets, developers should validate its suggestions against best practices to ensure reliability in production environments.

What are the limitations of AI in programming?

AI models like Copilot can produce misleading outputs, raise intellectual property concerns, and introduce security vulnerabilities if not carefully reviewed.

For ongoing updates and insights into AI and development, follow KnowLatest for the latest news and articles.
